- Open Source CEO by Bill Kerr

Building Smarter Models with Cheaper Tokens

An interview with Benny Chen, Co-Founder at Fireworks AI. 🎆

👋 Howdy to the 4,003 new legends who joined since our last edition! You are now part of a 408,588 strong tribe outperforming the competition together.

LATEST POSTS 📚

If you’re new, not yet a subscriber, or just plain missed it, here are some of our recent editions.

🍰 What’s the story with Perplexity Computer? It's now possible to have your AI cake and eat it too.

🪐 What Changes After Your Exit (Nothing?). An interview with Ronan Berder, exited-Founder & CEO at Wiredcraft.

💆🏻 The Ultimate Founder Mental Health Stack. Psychedelics, depression drugs, dog walks, training, meditation, Whoop and loads more.

PARTNERS 💫

With Granola, the AI Notepad for people with back-to-back meetings, you can avoid context switching, the cognitive load of remembering what you promised, and the stress of knowing something important slipped through.

Take notes the way you always have. Granola works in the background, turning conversations into clear summaries, action items, and next steps. Before, during, or after a meeting, you can chat with your notes.

Most people don’t struggle with tools—they struggle with knowing what to do next. (Or where to start!)

With seemingly endless possibilities within HighLevel, I found myself in that last category. What finally changed for me was discovering the 5 Day Challenge. Five days of learning the system and how to achieve some quick wins in my business gave me all the momentum I needed.

Ready to experience the same momentum for yourself? Join the next session of the 5 Day Challenge.

Interested in sponsoring these emails? See our partnership options here.

HOUSEKEEPING 📨

If you are a 90s kid (grew up, not born), Scottie Pippen is pretty familiar to you, to say the least. He rode shotgun to MJ through the Bulls’ championships with his lockdown defense and all-around play. Today, Scottie is more of an internet meme, dissing his partnership with His Airness, and even going as far as to claim he had spoken directly with Satoshi Nakamoto. But when I saw this tweet, it really hit me.

“Winners lose more than losers ever will.” It’s a beautiful statement, and not just relating to business. When making our way through this crazy life, we need to be able to make bets, take chances, and force open doors, knowing that when we do, we may fail. Nothing more for today. Enjoy the piece!

INTERVIEW 🎙️

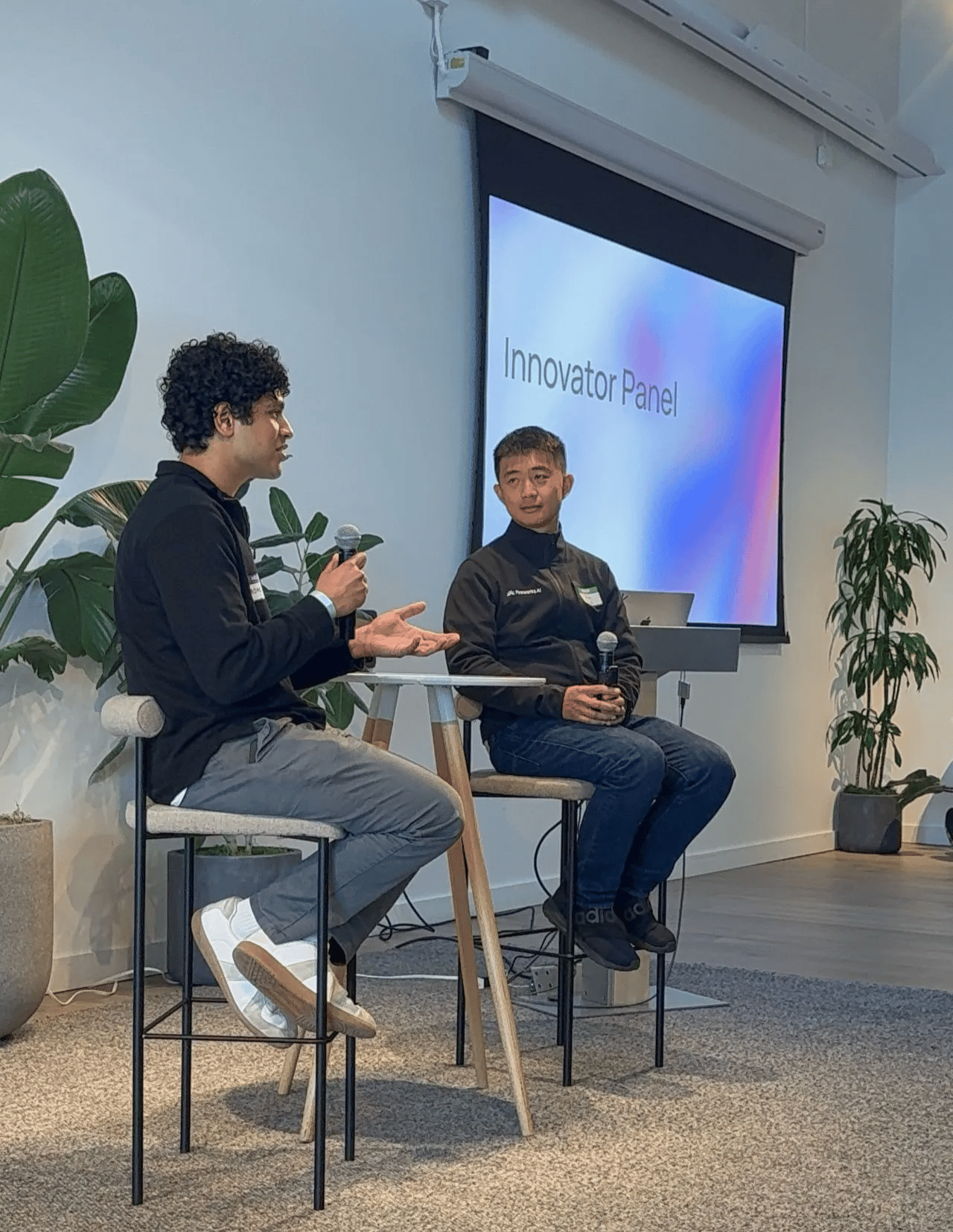

Benny Chen, Co-Founder at Fireworks AI

Benny Chen is Co-Founder at Fireworks AI, the infrastructure platform quietly becoming one of the most interesting bets in the AI space. A New Zealand-born, UCLA-educated engineer, Benny spent nearly a decade at Meta as Ads Infrastructure Lead before walking away to build something of his own—back in 2022, before ChatGPT was a thing, before your dad knew what a large language model was, and before anyone was talking about gigawatts of compute.

The company he helped build alongside CEO Lin Qiao and a crew of fellow Meta and PyTorch alumni has since raised over $300M from Benchmark, Sequoia, Lightspeed, and Index, and is now valued at $4B. Their clients include Uber, DoorDash, Notion, and Quora. Not bad for a team that, at one point, had no front-end engineer and a website Benny himself describes as ‘very ugly.’ In this interview, Benny gets into the nuts and bolts of model customization, what most companies are still getting wrong with AI, and what it actually takes to compete with the giants.

What’s the problem you're trying to solve, and why?

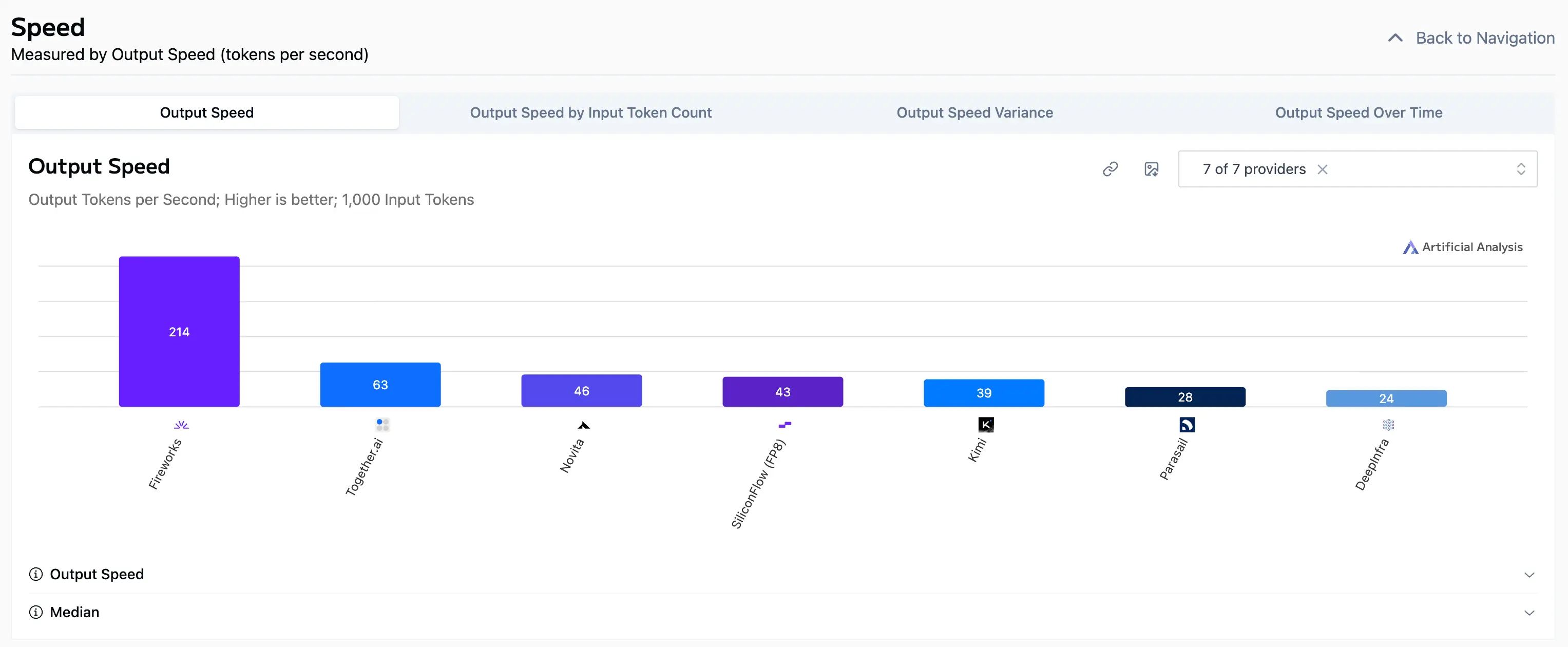

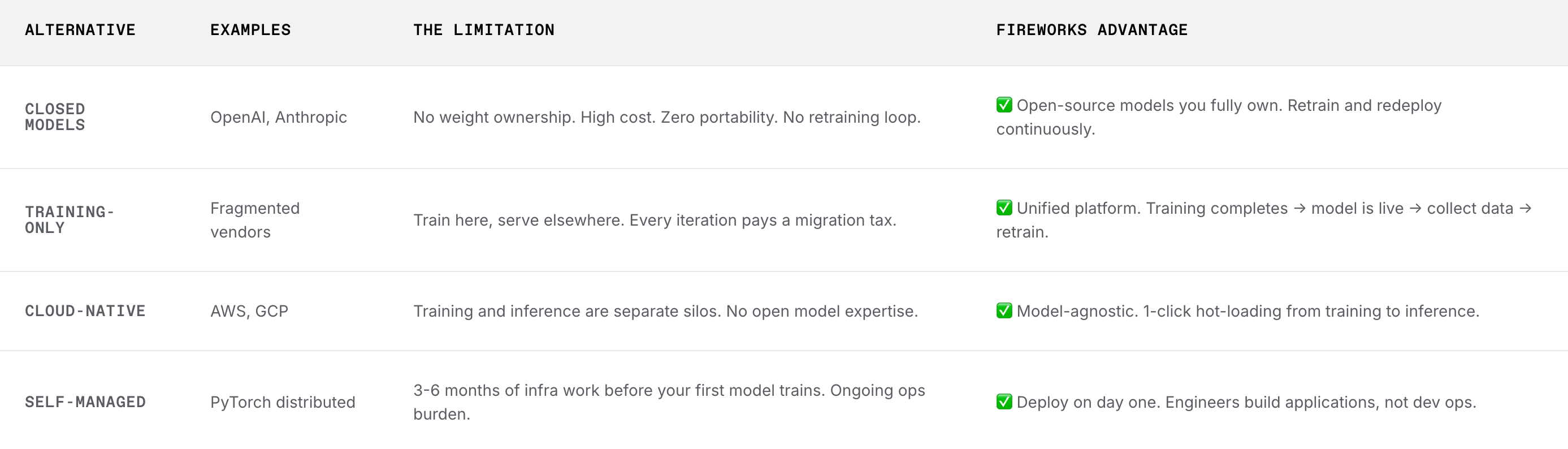

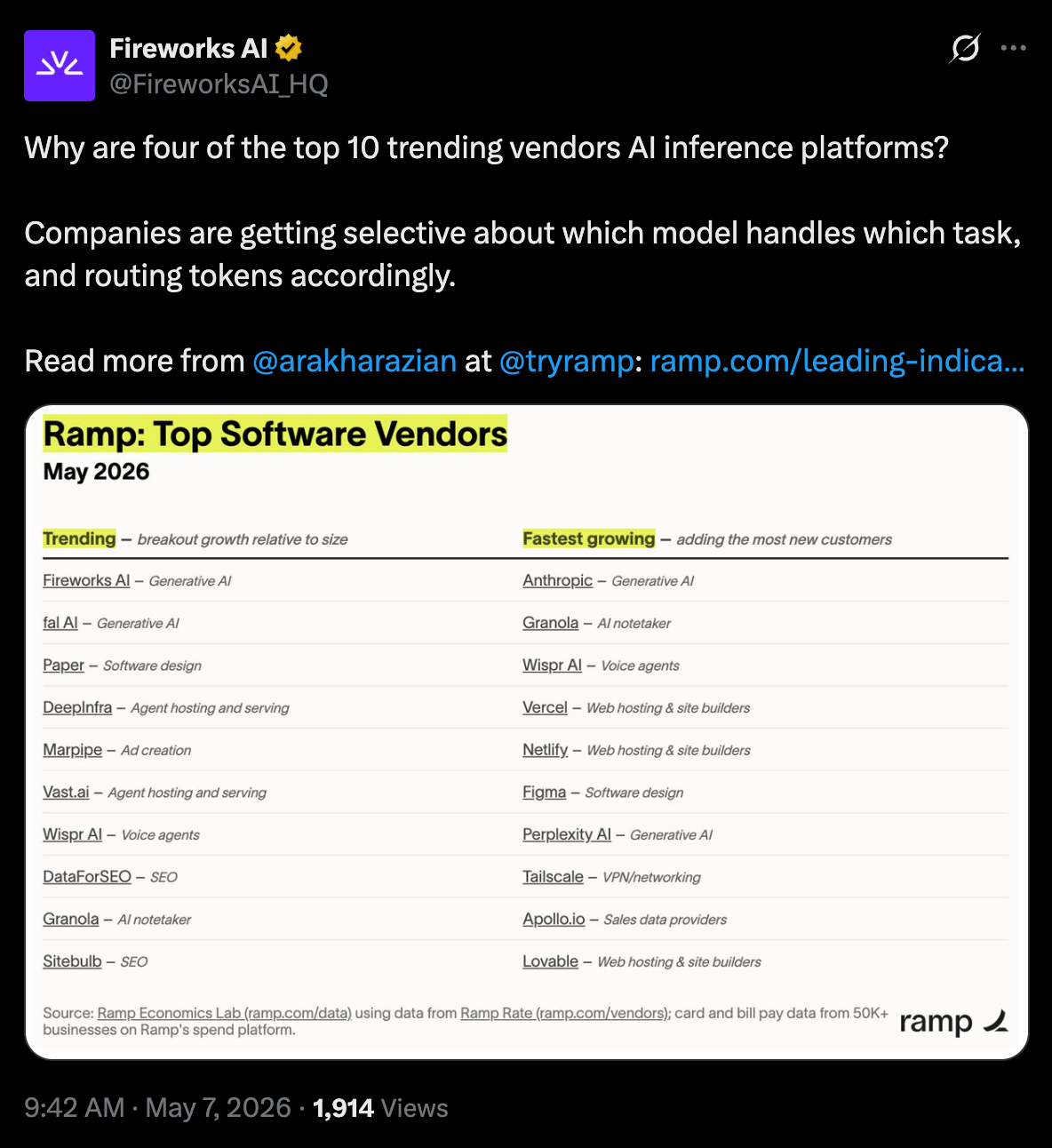

We want to help people bring down their total cost of ownership for their tokens. We care about this because we believe the demand for tokens is going to go through the roof, and it is very cost-prohibitive to scale those on frontier models from big labs.

Source: Fireworks AI.

When you ask your voice assistant to help you book a restaurant or check the weather, it doesn't need AGI-level intelligence to do that. We also work with a lot of startups that care deeply about scaling their businesses to their customers, and most companies operate within specific verticals. They don't work in settings like Gemini or ChatGPT, where the model has to know everything. Whether the agent knows which comedian told a specific joke in some obscure 1980s setup doesn't help you book a table on OpenTable. So we help people reduce their total cost of ownership for their tokens, primarily through model customization.

How is Fireworks providing this in a different way than competitors?

A lot of our work involves making sure that we have a great inference engine that is high quality, a great training engine that matches the quality of our inference engine, and that what we call ‘numerics’—the way that the GPU operates on these models—ends up producing consistent results. On top of that, we provide an easy on-ramp for people to customize their models.

One thing we do a lot is support reinforcement learning, which allows people to simply author an evaluator and start customizing models from there. That is very powerful, because when we were looking at model customization two years ago, it was primarily done through what we call supervised fine-tuning. Every time a conversation began, it would go something like: ‘We'd love to help you customize your model, but to do that, you need to assemble a small team of machine learning engineers who know how to define what 'good' looks like. Then we help you connect with data labelers, guide those labelers to produce a high-quality dataset, and after two months of back and forth, you can finally customize a model.’ That process is extremely time-consuming.

What we've realized, quite painfully, is that everyone working in the large language model space is stretched for time. What's really powerful about the reinforcement learning world today is that you can use a coding agent to help you write an evaluator for your application. As long as you can articulate to your coding agent what good and bad look like, and the coding agent can produce an evaluator, you're off to the races. You can already customize a model for your specific use case.
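To make "authoring an evaluator" concrete, here is a minimal sketch of what one could look like for the restaurant-booking example mentioned earlier. Everything in it is an assumption for illustration: the function name `evaluate`, the JSON output format, and the three field names are hypothetical, and this is not Fireworks' API. The idea is simply that an evaluator turns "good" and "bad" into a number a training loop can optimize.

```python
import json

# Hypothetical evaluator for a restaurant-booking assistant.
# The field names below are illustrative assumptions, not a real schema.
REQUIRED_FIELDS = ("restaurant", "party_size", "time")


def evaluate(model_output: str, expected: dict) -> float:
    """Return a reward in [0, 1] for a single model response."""
    try:
        booking = json.loads(model_output)
    except json.JSONDecodeError:
        return 0.0  # output that cannot be parsed is the clearest "bad" case
    if not isinstance(booking, dict):
        return 0.0
    # Give equal credit for each field that matches the expected booking.
    matches = sum(booking.get(field) == expected[field] for field in REQUIRED_FIELDS)
    return matches / len(REQUIRED_FIELDS)
```

A response that gets the restaurant and party size right but the time wrong would score 2/3 here, which gives the model partial credit to learn from rather than a flat pass or fail.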

What are the biggest problems today when customizing AI models?

There are two parts to this: what's most difficult, and what people are doing wrong. On the difficulty side, a lot of it comes down to the quality of evaluations. It is often very, very hard to clearly articulate what ‘good’ and ‘bad’ look like. One example I've seen is someone whose job is to generate beautiful HTML for a customer. Part of the evaluation might be whether the code is correct, but correctness doesn't necessarily mean good taste. So how do you articulate what good taste is? That is genuinely very difficult. People who come from a design background, or who have a strong product sense and clearly understand what good and bad look like, have a much easier time defining that. But without that clarity of intent, it's also very hard for a coding agent to guess what you're going for.

The second difficulty is ensuring the training stack is high quality. Because we control both the training and inference stacks, it's easy for us to ensure they align consistently. But when you introduce discrepancies between the two, the model gets confused. It's like a human learning from their environment; when you trip, it hurts, and you remember that. But if you're impaired and your brain is processing things with a 500-millisecond delay, you might not learn the right lesson. That kind of delay is terrible for models.
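One rough way to picture a training/inference discrepancy is to score the same tokens on both stacks and compare the per-token log-probabilities. The sketch below assumes you can already obtain those two lists; the function names and the `tolerance` default are hypothetical choices for illustration, not a description of how Fireworks checks alignment.

```python
def logprob_gap(train_logprobs, infer_logprobs):
    """Return (mean, max) absolute gap between per-token log-probabilities."""
    if not train_logprobs or len(train_logprobs) != len(infer_logprobs):
        raise ValueError("both stacks must score the same non-empty token sequence")
    gaps = [abs(t - i) for t, i in zip(train_logprobs, infer_logprobs)]
    return sum(gaps) / len(gaps), max(gaps)


def stacks_agree(train_logprobs, infer_logprobs, tolerance=1e-2):
    """True if no token's log-probability differs by more than the tolerance."""
    _, worst = logprob_gap(train_logprobs, infer_logprobs)
    return worst <= tolerance
```

If the worst-case gap is large, the model being trained is effectively being graded on outputs that a slightly different model produced, which is the "delayed feedback" problem described above.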

On the side of what people are doing wrong, many treat training as a completely separate, disjointed problem from their production environment. They're not doing reinforcement learning on the exact same setup they actually use. It's like training people in VR and then immediately throwing them into the real world. Training in VR is always different from the real thing. What we often encourage instead is using our open-source library, Eval Protocol, to trace directly from the production environment and perform reinforcement learning there. That way, you're not asking people to learn their job inside a VR headset in some simulated environment; they're learning on the job. It is indeed very difficult because you need to ensure your production environment doesn't have anomalies and isolate it correctly so the model doesn't, say, delete a database while it's learning. But we strongly encourage keeping the two setups consistent and minimizing discrepancies.
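The "don't let the model delete a database" point can be illustrated with a small guard around tool calls made during training rollouts. This is a hedged sketch only: `is_safe_query`, `run_tool_call`, and the keyword list are hypothetical, the check is deliberately crude, and it does not use or represent any Fireworks library. In a real setup, read-only credentials or an isolated replica would be a stronger form of isolation.

```python
import re

# Illustrative, non-exhaustive list of statements treated as destructive.
DESTRUCTIVE_SQL = re.compile(r"^\s*(drop|truncate|delete|alter)\b", re.IGNORECASE)


def is_safe_query(sql: str) -> bool:
    """Reject the query if any ';'-separated statement starts destructively."""
    return all(DESTRUCTIVE_SQL.match(stmt) is None for stmt in sql.split(";"))


def run_tool_call(sql: str, execute):
    """Run a model-issued query through `execute` only if the guard allows it."""
    if not is_safe_query(sql):
        # Return an error the model can observe instead of touching the database.
        return {"ok": False, "error": "blocked: destructive statement"}
    return {"ok": True, "result": execute(sql)}
```

The guard errs on the side of blocking, so a harmless query that happens to contain `;delete` inside a string literal would also be rejected. That trade-off seems reasonable while a model is still learning.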

How do you articulate taste to a model?

The success I've seen comes from people who are strong engineers and also have really good product sense. These people can translate customer feedback into rubrics that agents can understand. That's one piece of it.

The other is making sure you give the judging model, or your reward model, the right harness to actually do its job. For example, even if you show a human a piece of HTML and ask whether it looks nice, most people can't answer that question properly because they're expecting to see a rendered image. And even when you do render it, sometimes the visible part looks fine, but when you scroll down, it's completely broken. So, figuring out how to give the model the correct setup to evaluate effectively is also very difficult.

The last thing I'd add is that certain models are better at judging certain things than others. Actually experimenting with different frontier models and seeing which one performs best as a judge for your specific scenario is a very useful exercise.
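One simple way to run that experiment is to have each candidate judge label the same set of examples and measure how often it agrees with human labels. The sketch below assumes pass/fail labels and uses made-up judge names; `agreement` and `best_judge` are hypothetical helpers, not part of any particular library.

```python
def agreement(judge_labels, human_labels):
    """Fraction of examples where the judge's verdict matches the human's."""
    if not human_labels or len(judge_labels) != len(human_labels):
        raise ValueError("need the same non-empty set of examples for both")
    hits = sum(j == h for j, h in zip(judge_labels, human_labels))
    return hits / len(human_labels)


def best_judge(judgments, human_labels):
    """Return the name of the judge that agrees most often with humans.

    `judgments` maps a judge name to its list of pass/fail verdicts.
    """
    return max(judgments, key=lambda name: agreement(judgments[name], human_labels))
```

For example, with human labels `[True, False, True, True]`, a judge that passes everything agrees on 3 of 4 examples, while one that reproduces the human labels exactly agrees on all 4 and would be picked.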

What makes a great AI product today?

On building great AI products, I think the key is to push as far as you can on product-model fit. Everyone knows OpenAI's voice assistant, for example, and there were so many people who built similar things six months or a year before it took off, but they didn't stick because the model wasn't good enough yet. Your product has to arrive at just the right time, when the model is barely good enough to do its job. When that alignment happens, it takes off.

Riding that wave is very difficult, though. You have to predict, at least roughly, what the model will look like in three months, and you have to stay very focused because you're making a contrarian bet rather than just copying another product. And I do think that simply copying another product is very, very hard to pull off, because go-to-market capability is so important these days. But if you build something new that you believe will be just barely good enough in a month or two, I think you'll get a lot of traction. We've seen a lot of people on our platform build pretty remarkable things, and it feels to me like they're betting that the model will be good enough in a few months.

On training success, for the people who have successfully trained models on our platform, I do think it requires patience from company leadership, which often doesn't come easily. A lot of our customers are very AI-forward; either their primary product is built entirely on language models, or their leadership has already bought in and is committed to training models and building a competitive edge. That level of conviction is actually very, very hard to cultivate because it costs money and time, and, as I mentioned before, everyone is running around like their hair is on fire. So it does require strong leadership and deep conviction to see it through. But we've seen many success cases where teams go all the way. They build the right team, work with us to train a model, and ultimately achieve a sustained competitive advantage.

What has been the most difficult thing going from zero to one?

At the start, we were building a PyTorch training platform because there was no ChatGPT then. We left Meta because we knew that AI infrastructure would take off. To be transparent, I did not expect it to take off at this rate. I never expected my parents to understand what I do. I used to tell them I push ads onto Instagram, and they'd say, "Sure, I see some ads on Instagram, I don't know how you do it." But now my mom is using all these AI applications to do her job. It's amazing. I don't think I ever expected this level of penetration, this level of takeoff. I just knew that infrastructure was going to take off because I could see the demand coming in for general NLP, computer vision, recommendation systems, and so on.

So when ChatGPT came out, it was important for us to decide whether to lean into generative AI, and how much. In retrospect, that pivot looks pretty straightforward: of course we should have just leaned into generative AI. But I do want to remind people that the open-source models three years ago were Falcon and Llama 1, and you could barely hold a two-turn conversation with them. So it was a bet, and I'm glad it paid off. Going forward, I think helping people customize models more easily will be very, very important. Customizing models involves many details and many points where things can go wrong, so making that process as easy as possible, especially with coding agents today, is something we're very focused on. Though honestly, I think the jury is still out on exactly how we get there.

What are the strengths of each fund, and what makes them great?

To be transparent, our CEO, Lin, managed most of the investor relationships, so I don't have full visibility into everything. But one thing I do want to highlight is that Eric from Benchmark put in a lot of time and trust early on. When we raised in 2022, it was before ChatGPT, and none of us knew exactly how AI infrastructure would take off. We just had a strong conviction that it would. Putting that level of trust into us at that stage required a lot of soul-searching on Eric's part, I'm sure. I really appreciated that initial vote of confidence.

How do you get the best out of yourself personally and professionally?

I've had to give up a lot of things to stay efficient, and I'm not sure whether that's a good or bad thing. I have a young kid who is about 20 months old, so my schedule right now is pretty much work, take care of the baby, sleep, and repeat.

I used to play a lot of video games and ball games, and there were a lot of things I'd normally do to kill time that have fallen away. So I don't know if I have specific rituals, but what I've found very useful is being conscious about making enough time just for my own headspace, on top of everything else. Just being bored. Sitting somewhere quiet without having to worry about anything for 15 or 30 minutes, or going for a walk outside on my own. That is very, very useful for me.

I think of it as a language model with the temperature turned up, exploring and seeing what you come up with. It's not really a ritual, but just taking a walk outside alone, thinking through the decisions I've made and whether they still make sense, is something I find incredibly valuable.

Extra reading / learning

Building a $4 Billion AI Infra Company - February, 2026

Inference is the New Runtime: Our Investment in Fireworks - October, 2025

And that’s it! You can follow Benny on LinkedIn or check out Fireworks AI on their website to keep up with what they’re building.

TOOLS WE RECOMMEND 🛠️

Every week, we highlight tools we like and those we actually use inside our business and give them an honest review. Today, we are highlighting Lightfield*—an AI-native CRM that assembles itself from your email, calendar, and meetings.

See the full set of tools we use inside of Athyna & Open Source CEO here.

HOW I CAN HELP 🥳

P.S. Want to work together?

Hiring global talent: If you’re hiring tech, business or ops talent and want to do it for 80% less, check out my startup, Athyna. 🌏

See my tech stack: Find our suite of tools & resources for both this newsletter and Athyna here. 🧰

Reach an audience of tech leaders: Advertise with us if you want to get in front of founders, investors and leaders in tech. 👀
